Companies possess vast amounts of internal knowledge, ranging from HR policies and technical manuals to detailed customer data. Making this information accessible to employees and customers via AI chatbots presents a significant opportunity. However, this also carries risks, such as providing outdated or incorrect answers. This is where Retrieval-Augmented Generation (RAG) comes into play as a transformative technology. RAG enhances Large Language Models (LLMs) by connecting them to authoritative, external knowledge databases. This approach ensures that a chatbot uses the most up-to-date, verified information when responding, making it a powerful tool for any business.

Understanding Retrieval-Augmented Generation (RAG)

At its core, Retrieval-Augmented Generation (RAG) is a technique designed to improve the accuracy and reliability of generative AI models. Instead of relying solely on its static training data, an LLM equipped with RAG first retrieves relevant information from a specific knowledge source. This could be a company’s internal database, a collection of guidelines, or even a live news feed.

Imagine an LLM as a brilliant but sometimes forgetful new employee. It has a broad understanding but lacks specific, up-to-date company knowledge. RAG acts as the company’s internal library, providing the new employee with exactly the documents they need to answer a question correctly. This process fills a critical gap in how standard LLMs operate and reduces the likelihood that the model will “hallucinate” or invent information.

The growing popularity of AI means that more and more companies are looking for ways to use it without falling into common pitfalls. The problem of shadow AI—unofficial AI tools used by employees—often stems from the need for better access to information. By implementing a reliable internal tool using RAG, companies can offer a secure and effective alternative.

How does Retrieval-Augmented Generation (RAG) work?

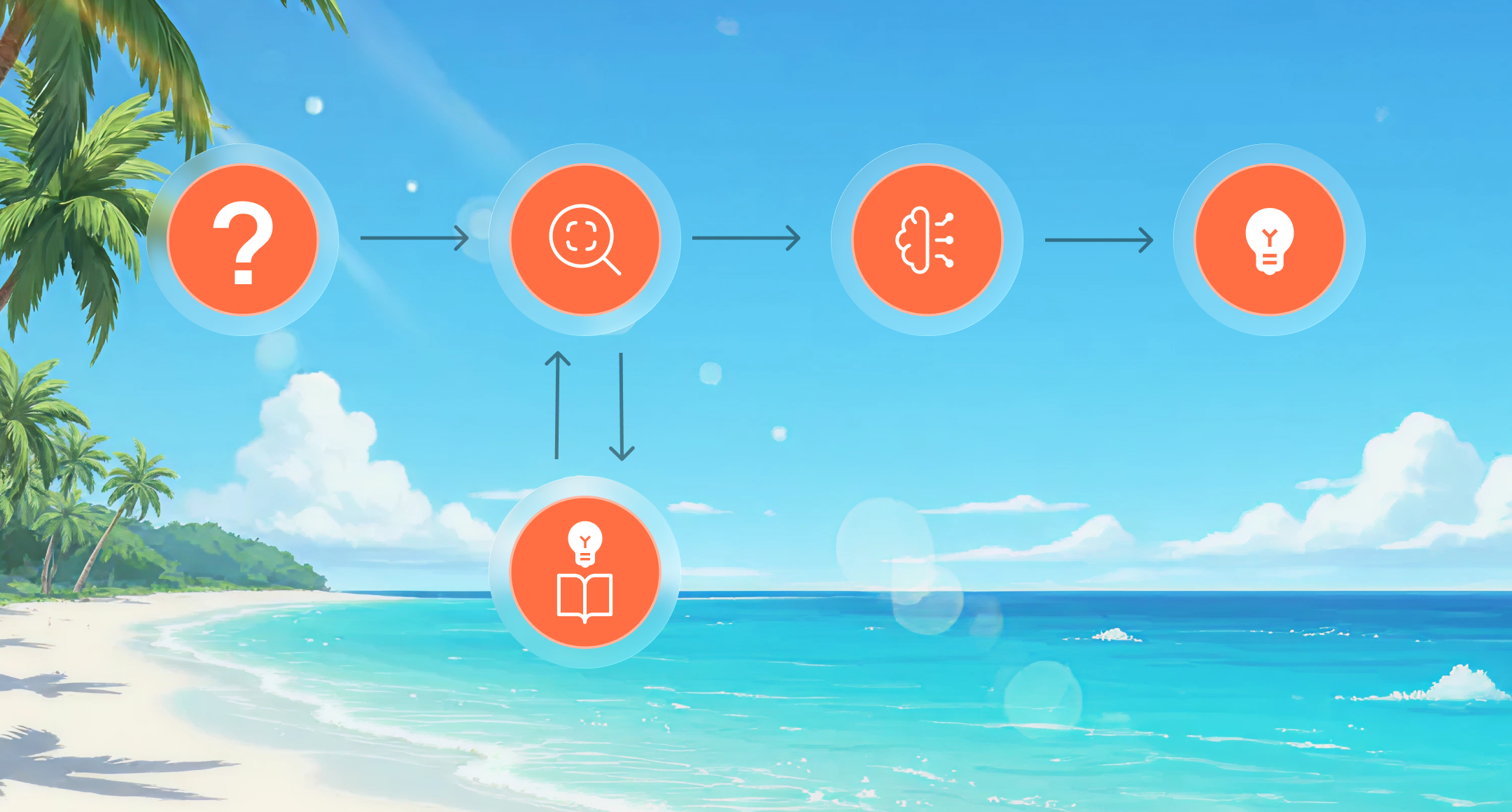

The RAG process can be broken down into a few key steps. It is a sophisticated yet elegant solution to a common AI problem. The definition of Retrieval-Augmented Generation (RAG) encompasses two main phases: retrieval and generation.

- Retrieval phase: When a user sends a query to the chatbot, the system does not immediately forward it to the LLM. First, it uses a retrieval component to search a specific knowledge base. This knowledge base, often a vector database, contains numerical representations (embeddings) of your company’s documents. The system finds the information most relevant to the user’s query.

- Augmentation phase: The retrieved information is then appended to the original user query. This augmented query provides the LLM with the necessary context to formulate its response.

- Generation phase: Finally, the LLM generates a response based on both its internal knowledge and the new, context-rich information. This response is more accurate, more specific, and grounded in your company’s actual data. This approach helps build user trust, as the model can often cite its sources, clearly showing where the information comes from.

The Business Case for RAG in Enterprise Environments

Integrating AI into business operations requires a clear strategy. Simply introducing a generic chatbot can create more problems than solutions. For those considering a roadmap for introducing AI into your company, understanding technologies like RAG is crucial for successful implementation.

Improving Data Security and Control

One of the biggest concerns for companies is data security. Sharing sensitive corporate data with a third-party model is a no-go for most. RAG offers a solution by keeping proprietary data separate from the LLM. The model does not need to be retrained using your data; it only accesses small, relevant snippets based on individual queries.

This architecture enables robust access controls. You can implement permissions so that the RAG system retrieves only documents that a specific user is authorized to view. This ensures that sensitive information, such as financial reports or employee data, remains protected. As companies navigate the complexities of data management, establishing strong data governance for RAG is a crucial step.

Improving Accuracy and Reducing Hallucinations

LLM hallucinations—confident but incorrect answers—can severely damage a company’s reputation. Air Canada learned this lesson the hard way when its chatbot provided incorrect information about bereavement fare policies—an error the airline was legally obligated to comply with.

The RAG (Retrieval-Augmented Generation) technique addresses this problem head-on. By grounding the model’s responses in a verifiable knowledge base, the risk of it inventing facts is significantly reduced. The model is instructed to base its response on the provided documents, making the output more reliable and trustworthy.

Cost-effective and scalable implementation

Fine-tuning an LLM on a custom dataset is a computationally intensive and time-consuming process. RAG offers a significantly more cost-effective alternative. Instead of retraining the entire model, you simply need to maintain and update your knowledge base. This is a far simpler and more cost-effective task.

As your company’s knowledge evolves, you can easily add new documents or update existing ones. The RAG system can then immediately utilize this new information without causing any downtime for the model. This scalability makes it a practical choice for dynamic business environments.

Practical Use Cases for RAG-Powered Chatbots

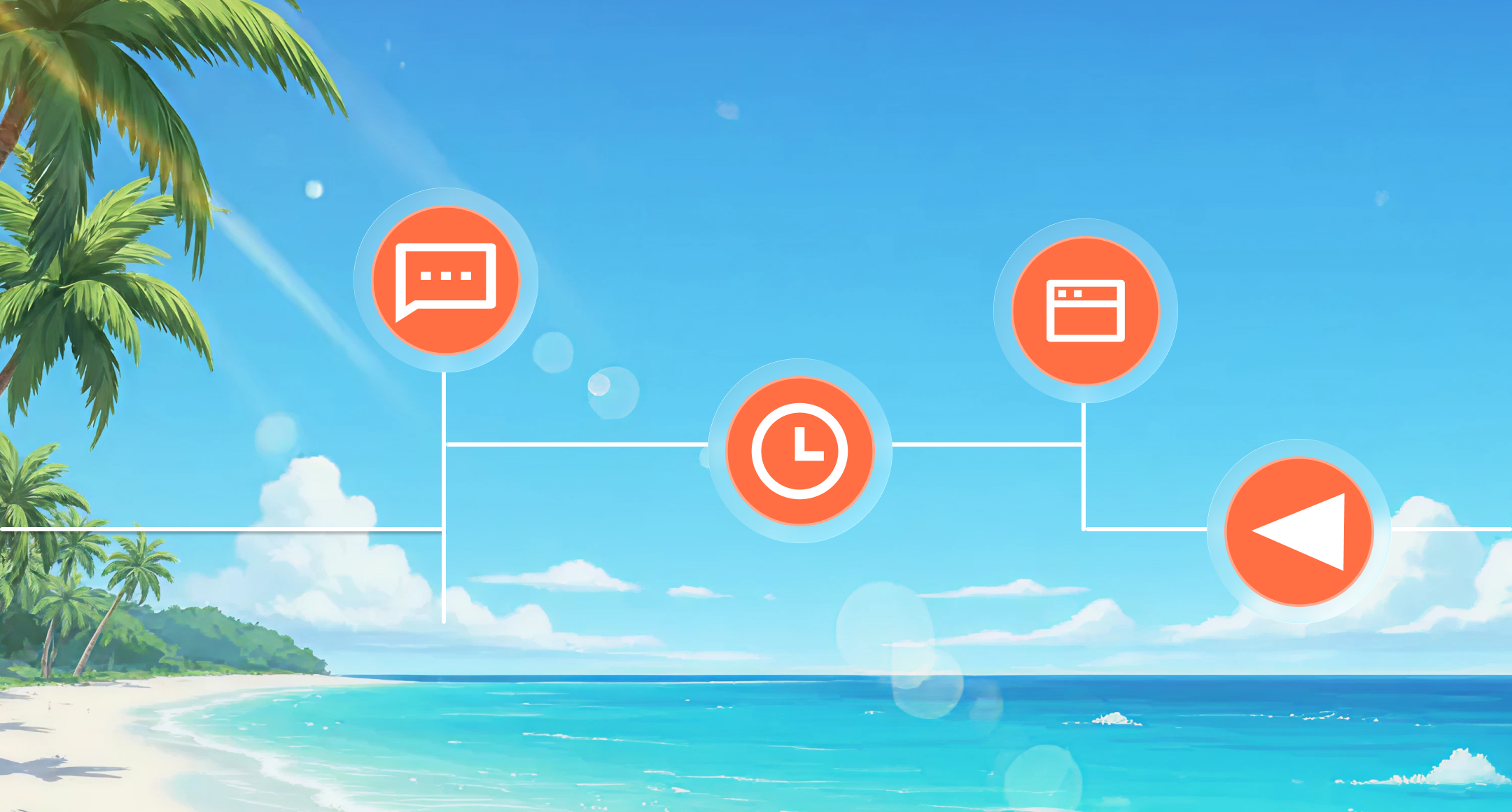

The potential applications for RAG within a company are diverse. Nearly every department can benefit from faster and more accurate access to information.

Human Resources and Employee Onboarding

New employees are often overwhelmed by information. A RAG-powered HR chatbot can act as a personal assistant, answering questions about company policies, benefits, and internal processes. Instead of sifting through a cluttered intranet, an employee can simply ask, “What are our parental leave policies?” and receive a concise, accurate answer sourced directly from the latest HR documents.

Automating Customer Support

Customer support teams handle repetitive questions every day. A RAG-powered chatbot can handle a large volume of these inquiries and provide immediate, precise answers regarding product features, troubleshooting steps, or return policies. This frees up human agents so they can focus on more complex customer issues. For a deeper look into this topic, an introduction to the OpenAI AgentKit can demonstrate how these systems are built.

Internal Knowledge Management for Technical Teams

Engineers and developers need quick access to technical documentation, coding standards, and project histories. A RAG system can serve as a powerful internal search engine, enabling a developer to ask complex questions such as: “What are the best practices for deploying microservices in our AWS environment?” and receive an answer compiled from internal wikis, code repositories, and best-practice guides. This approach can significantly improve productivity and knowledge sharing.

Conclusion

Introducing AI into your business doesn’t have to be a leap into the unknown. With the right architecture, you can develop powerful and secure tools that deliver real value. The Retrieval-Augmented Generation (RAG) framework offers a pragmatic path forward, enabling companies to harness the power of LLMs while maintaining control over their data and ensuring the accuracy of the information provided. By basing chatbots on your own corporate knowledge, Retrieval-Augmented Generation (RAG) transforms them from unpredictable novelties into reliable, indispensable business tools.

FAQs

What is Retrieval-Augmented Generation (RAG)?

Retrieval-Augmented Generation (RAG) is an AI technique that enhances large language models. Before generating a response, it retrieves factual information from an external knowledge base, ensuring that the response is accurate, up-to-date, and based on reliable data rather than solely on the model’s training.

How does RAG improve chatbot security?

RAG improves security by separating your proprietary data from the LLM. For each query, the model accesses only small, relevant data snippets and can be configured with user-based permissions, preventing unauthorized access to sensitive information without exposing the entire dataset.

What is the main difference between RAG and fine-tuning?

RAG provides a model with new information externally at the time of a query. Fine-tuning, on the other hand, retrains the model’s internal parameters using a new dataset. RAG is more cost-effective and easier to keep up to date than fine-tuning.

Can RAG completely eliminate AI hallucinations?

While RAG significantly reduces the risk of hallucinations by grounding answers in facts, it may not be able to eliminate them entirely. The quality of the response still depends on the quality and relevance of the retrieved information, as well as the LLM’s ability to synthesize it.

What is a vector database, and why is it used with RAG?

A vector database stores data as numerical representations, known as embeddings. It is used with RAG because it enables fast and efficient semantic search, allowing the system to find the most contextually relevant information for a user’s query, even without exact matches to search terms.

Is implementing a RAG system difficult for a company?

The level of difficulty depends on the existing infrastructure. However, many platforms now offer managed services that simplify RAG implementation. Tools such as Amazon Bedrock and various open-source libraries have made building and deploying RAG systems more affordable for companies of all sizes.