Workflow automation is often discussed as a means of improving efficiency. In practice, however, its real significance lies in the stability of operational processes.

Ultimately, it is a matter of organizational maturity. Organizations rarely automate because they “don’t have enough time.” They automate because processes are not running smoothly: too many handoffs, too many exceptions, too little transparency. Without a clean design, automation actually exacerbates the very problems it is meant to solve.

The real value does not come from tools or individual use cases. It comes from clear states, defined responsibilities, reliable data, and transparent decisions. That is why the central question is not what can be automated, but which processes drive the business and are at the same time vulnerable enough to benefit from automation.

What Workflow Automation Really Means

Workflow automation is not an “if X, then Y” script. It is the ability to execute recurring processes reliably, transparently, and at scale, including (and especially) when errors occur, data is incomplete, or systems fail to respond.

Every automated workflow follows the same logic:

- a trigger (event)

- processing (transformation/enrichment)

- a decision (rule or model)

- an action (system update, communication, approval)
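The four stages can be made concrete in a few lines. The following is a minimal Python sketch, not a reference design: the `Workflow` class, the invoice example, and the 1,000 approval threshold are all hypothetical. It also illustrates one design choice the text implies: a decision outcome with no defined action raises an error instead of silently doing nothing.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Workflow:
    """Minimal four-stage workflow: trigger -> processing -> decision -> action."""
    process: Callable[[dict], dict]            # transformation/enrichment
    decide: Callable[[dict], str]              # rule or model; returns an outcome label
    actions: dict[str, Callable[[dict], Any]]  # outcome label -> action

    def on_event(self, event: dict) -> Any:
        # Trigger: each incoming event starts one run of the workflow.
        enriched = self.process(event)
        outcome = self.decide(enriched)
        # An outcome without a defined action is treated as an error, not a silent no-op.
        if outcome not in self.actions:
            raise ValueError(f"no action defined for outcome {outcome!r}")
        return self.actions[outcome](enriched)


# Hypothetical example: invoice approval with an illustrative threshold of 1,000.
invoice_flow = Workflow(
    process=lambda e: {**e, "amount": float(e["amount"])},
    decide=lambda d: "auto_approve" if d["amount"] < 1000 else "manual_review",
    actions={
        "auto_approve": lambda d: f"approved {d['id']}",
        "manual_review": lambda d: f"queued {d['id']} for review",
    },
)

print(invoice_flow.on_event({"id": "INV-1", "amount": "250"}))  # approved INV-1
```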

Two misconceptions almost always arise:

Misconception 1: Automation primarily saves time

Time savings are a byproduct. The real value lies in stability, reproducibility, and auditability.

Misconception 2: AI replaces process design

AI can support decision-making, but it cannot replace process logic. Without clear states, rules, and responsibilities, it increases uncertainty rather than reducing it.

Which Workflows Are Truly Worthwhile

Not every process is a good candidate for automation. The decisive factor is the combination of impact and feasibility.

A workflow is worthwhile when several factors come together:

- high frequency: the sequence occurs regularly

- significant error costs: manual errors have noticeable consequences

- clear, or at least structurable, decisions that can be translated into rules or models

- multiple system transitions where information is currently transferred manually

- a measurable output against which success can be objectively evaluated
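These criteria can serve as a simple screening checklist. The sketch below is illustrative only: the `Candidate` fields, the threshold of 50 runs per month, and the minimum of two system handoffs are assumptions chosen for the example, not fixed rules.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    runs_per_month: int
    error_cost_significant: bool
    decisions_structurable: bool
    system_handoffs: int        # manual transfers of information between systems
    output_measurable: bool


def criteria_met(c: Candidate, min_runs: int = 50) -> list[str]:
    """Return the criteria a candidate satisfies (thresholds are illustrative)."""
    checks = {
        "high frequency": c.runs_per_month >= min_runs,
        "significant error costs": c.error_cost_significant,
        "structurable decisions": c.decisions_structurable,
        "multiple system transitions": c.system_handoffs >= 2,
        "measurable output": c.output_measurable,
    }
    return [name for name, ok in checks.items() if ok]


invoices = Candidate("incoming invoices", 400, True, True, 3, True)
strategy = Candidate("annual strategy review", 1, True, False, 1, False)
print(criteria_met(invoices))   # all five criteria
print(criteria_met(strategy))   # ['significant error costs']
```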

In practice, a clear pattern emerges: it is not the most complex process that provides the greatest leverage, but the one that occurs frequently and is at the same time prone to errors. This is where isolated automation turns into genuine operational improvement.
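A rough way to compare candidates is the expected cost of manual errors per month: runs per month × error rate × cost per error. The figures below are invented for illustration, and the formula deliberately ignores implementation effort, which belongs on the feasibility side of the assessment.

```python
def expected_monthly_error_cost(runs_per_month: float, error_rate: float,
                                cost_per_error: float) -> float:
    """How often the process runs, times how often a run goes wrong,
    times what a single error costs."""
    return runs_per_month * error_rate * cost_per_error


# Hypothetical figures for illustration only.
complex_but_rare = expected_monthly_error_cost(2, 0.30, 2000)           # ~1,200 per month
frequent_and_error_prone = expected_monthly_error_cost(400, 0.05, 150)  # ~3,000 per month
```

Even though the rare process fails far more often per run, the frequent one accumulates more than twice the error cost.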

Concrete Examples of Workflow Automation

In document and knowledge processes, the leverage comes primarily from structure: when content is automatically classified, enriched with metadata, and linked to deadlines or obligations, less effort goes into searching and decisions become traceable. This is particularly evident in knowledge-based systems that generate answers only from verified sources, reducing reliance on individual people.
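Rule-based classification with deadlines can be sketched as follows. The keywords, document types, and follow-up periods are hypothetical; production systems often use trained classifiers instead, but the principle of attaching metadata and a due date at intake is the same. Documents that match no rule are labelled `unclassified` rather than guessed.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical rules: (keyword, document type, days until follow-up is due).
# Evaluated in order, so more specific keywords must come first.
RULES = [
    ("termination", "contract_notice", 14),
    ("invoice", "invoice", 30),
    ("contract", "contract", 90),
]


@dataclass
class ClassifiedDocument:
    title: str
    doc_type: str
    received: date
    due: Optional[date]  # None when no obligation could be derived


def classify(title: str, text: str, received: date) -> ClassifiedDocument:
    content = f"{title} {text}".lower()
    for keyword, doc_type, days in RULES:
        if keyword in content:
            return ClassifiedDocument(title, doc_type, received,
                                      received + timedelta(days=days))
    # No rule matched: label explicitly instead of guessing.
    return ClassifiedDocument(title, "unclassified", received, None)
```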

In finance processes, the effect lies less in speed than in error prevention and audit trails. Automated processing of incoming invoices, consistent approvals, and systematic reconciliation across multiple systems ensure that decisions become reproducible and can be substantiated in an audit.
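Reconciliation across systems comes down to comparing records by a shared key and sorting every record into an explicit outcome. This sketch assumes both systems expose amounts keyed by invoice ID; that layout and the bucket names are hypothetical. `Decimal` is used because binary floating-point numbers are unsuitable for exact comparisons of money amounts.

```python
from decimal import Decimal


def reconcile(erp: dict[str, Decimal], bank: dict[str, Decimal]) -> dict[str, list[str]]:
    """Compare two systems keyed by invoice ID. Every ID lands in exactly one
    bucket, so nothing disappears silently and each result can be audited."""
    result = {"matched": [], "amount_mismatch": [], "missing_in_bank": [], "missing_in_erp": []}
    for inv_id, amount in sorted(erp.items()):
        if inv_id not in bank:
            result["missing_in_bank"].append(inv_id)
        elif bank[inv_id] != amount:
            result["amount_mismatch"].append(inv_id)
        else:
            result["matched"].append(inv_id)
    # Records that exist only in the second system are reported as well.
    result["missing_in_erp"] = sorted(set(bank) - set(erp))
    return result
```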

In the HR context, automation is less a lever for efficiency than a means of risk control. Standardized onboarding and offboarding processes, clean document flows, and structured pre-qualifications lead to consistent workflows and clear responsibilities.
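A standardized offboarding process can be expressed as a template that produces the same tasks for every leaver, each with exactly one owning role and a due date. The task list, roles, and day offsets below are hypothetical examples.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical template: (task, responsible role, days relative to the last working day).
OFFBOARDING_TEMPLATE = [
    ("Revoke system access", "IT", 0),
    ("Collect equipment", "Facilities", 0),
    ("Issue employment reference", "HR", 14),
    ("Final payroll run", "Payroll", 30),
]


@dataclass(frozen=True)
class Task:
    employee: str
    name: str
    owner_role: str
    due: date


def offboarding_tasks(employee: str, last_day: date) -> list[Task]:
    """Every leaver gets the same tasks, each with one owning role and a due date."""
    return [Task(employee, name, role, last_day + timedelta(days=offset))
            for name, role, offset in OFFBOARDING_TEMPLATE]
```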

In IT, the difference is most evident: incident triage, automated responses to known errors, and structured change processes increase not only speed but, above all, stability. Here, automation becomes a means of keeping systems manageable, not just making them more efficient.
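Triage with automated responses to known errors can be sketched as a catalogue of error signatures mapped to runbook actions, with everything else escalated. The patterns, runbook names, and priority scheme are hypothetical placeholders.

```python
import re
from dataclasses import dataclass

# Hypothetical known-error catalogue: signature pattern -> runbook action.
KNOWN_ERRORS = [
    (re.compile(r"disk usage above \d+%"), "run_log_cleanup"),
    (re.compile(r"certificate expires in \d+ days"), "renew_certificate"),
]


@dataclass
class TriageResult:
    action: str    # an automated runbook step, or "escalate"
    priority: str


def triage(message: str, affects_production: bool) -> TriageResult:
    for pattern, runbook in KNOWN_ERRORS:
        if pattern.search(message.lower()):
            return TriageResult(runbook, "low")
    # Unknown errors are never auto-remediated; they go to a person with a priority.
    return TriageResult("escalate", "high" if affects_production else "medium")
```

The key design choice is that only known, catalogued errors are handled automatically; anything unrecognized reaches a person.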

In marketing and sales, the benefits stem from improved controllability. Leads are consistently routed, quotes are created in a reproducible manner, and content is managed through clear approval processes. Speed increases—but only because the underlying workflows are clearly defined.
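Consistent lead routing usually means an ordered rule table that is evaluated the same way every time. The regions, the 50,000 threshold, and the team names below are illustrative assumptions.

```python
# Hypothetical routing table, checked top to bottom: (region, minimum deal value, team).
ROUTING_RULES = [
    ("EU", 50_000, "eu_enterprise"),
    ("EU", 0, "eu_smb"),
    ("US", 50_000, "us_enterprise"),
    ("US", 0, "us_smb"),
]


def route_lead(region: str, estimated_value: int) -> str:
    for rule_region, min_value, team in ROUTING_RULES:
        if region == rule_region and estimated_value >= min_value:
            return team
    # Leads that match no rule go to a named fallback queue instead of to nobody.
    return "unassigned_review"
```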

Why Automation Fails in Practice

Many automation efforts fail not because of the use case, but due to a lack of operational logic. Typical pain points include missing error paths for retries, timeouts, or escalations; duplicate executions; unclear responsibilities; uncontrolled data flows; and a lack of logging. Idempotence is also frequently missing: triggering the same process multiple times must not create duplicate entries, tasks, or data states.
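Idempotence and error paths can be combined in one small wrapper: a repeated trigger with the same idempotency key returns the stored result instead of repeating the side effect, transient failures are retried with exponential backoff, and exhausted retries are escalated. In this sketch `TransientError`, the in-memory `processed` store, and the `escalations` list are hypothetical stand-ins. The check-then-act on an in-memory dict is not safe across concurrent workers; a real implementation would persist keys, for example behind a unique database constraint.

```python
import time
from typing import Callable, Optional


class TransientError(Exception):
    """Failure that may succeed on retry, such as a timeout."""


processed: dict[str, str] = {}  # idempotency key -> result (in memory here; persist it in practice)
escalations: list[str] = []     # stand-in for a ticket queue or on-call alert


def run_idempotent(key: str, action: Callable[[], str],
                   max_attempts: int = 3, base_delay: float = 0.01) -> Optional[str]:
    # Idempotence: a repeated trigger with the same key returns the stored result
    # instead of executing the side effect a second time.
    if key in processed:
        return processed[key]
    for attempt in range(1, max_attempts + 1):
        try:
            result = action()  # non-transient exceptions propagate to the caller
        except TransientError as exc:
            if attempt == max_attempts:
                # Error path: retries exhausted, so hand the case to a person.
                escalations.append(f"{key}: {exc}")
                return None
            time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
        else:
            processed[key] = result
            return result
    return None
```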

The crux lies in the transition from demo to production. A demo shows the ideal case. In production, what counts are edge cases, side effects, and incomplete data. This is where it is decided whether a workflow runs stably or generates additional effort.
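In production, incomplete data should lead to an explicit state rather than a crash or a guess. The mandatory fields and the plausibility check below are hypothetical; the point is that every record ends up either `ready` or `needs_review`, with a list of concrete problems attached.

```python
REQUIRED_FIELDS = ("customer_id", "amount", "currency")  # hypothetical mandatory fields


def validate(record: dict) -> tuple[str, list[str]]:
    """Return (state, problems). Incomplete or implausible records go to
    'needs_review' instead of failing silently or being processed with guesses."""
    problems = [f"missing {name}" for name in REQUIRED_FIELDS
                if record.get(name) in (None, "")]
    amount = record.get("amount")
    if isinstance(amount, (int, float)) and amount <= 0:
        problems.append("non-positive amount")
    return ("needs_review", problems) if problems else ("ready", [])
```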

What Really Matters: Process Design Over Tool Selection

The mistake usually happens earlier: a tool is selected before the process is clearly defined. Yet these questions must be clarified first: What triggers the workflow? What states exist? Who makes decisions? When is an issue escalated? What data is mandatory? Only once these points are clear can a sensible decision be made about how the process should be implemented.
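The questions about triggers, states, decision-makers, and escalation can be answered in an explicit transition table before any tool is chosen. This sketch uses hypothetical states and roles; any transition not in the table is rejected, and only the designated role may perform each one.

```python
from enum import Enum


class State(Enum):
    RECEIVED = "received"
    IN_REVIEW = "in_review"
    APPROVED = "approved"
    REJECTED = "rejected"
    ESCALATED = "escalated"


# Hypothetical process definition: allowed transitions and the role that owns each.
TRANSITIONS = {
    (State.RECEIVED, State.IN_REVIEW): "clerk",
    (State.IN_REVIEW, State.APPROVED): "approver",
    (State.IN_REVIEW, State.REJECTED): "approver",
    (State.IN_REVIEW, State.ESCALATED): "system",
    (State.ESCALATED, State.APPROVED): "manager",
    (State.ESCALATED, State.REJECTED): "manager",
}


def transition(current: State, target: State, role: str) -> State:
    owner = TRANSITIONS.get((current, target))
    if owner is None:
        raise ValueError(f"transition {current.value} -> {target.value} is not defined")
    if role != owner:
        raise PermissionError(f"{role} may not move {current.value} -> {target.value}")
    return target
```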

Operation & Measurability: The Underestimated Part

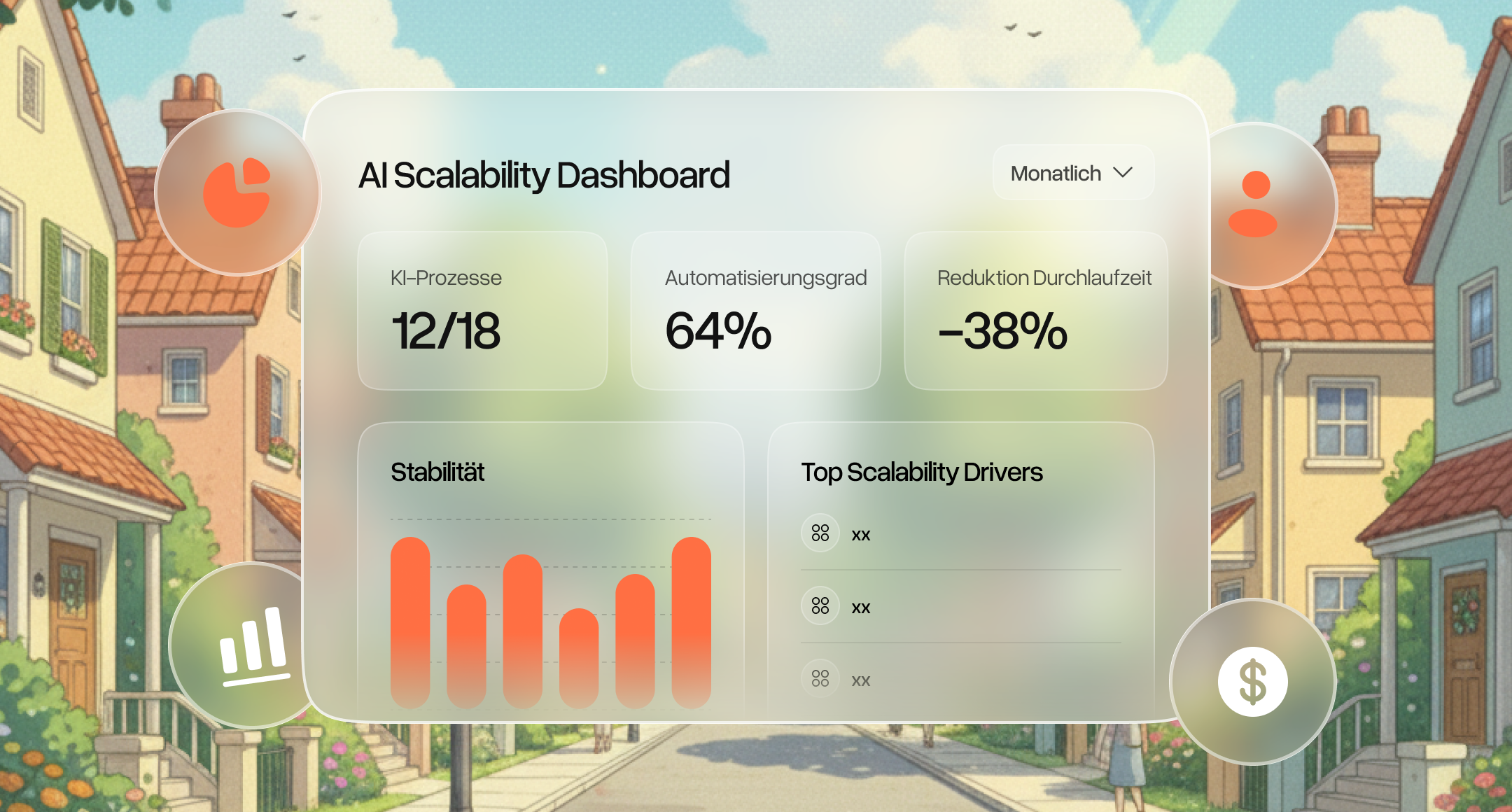

Automation is not complete once the system goes live. Only then does it become clear whether a workflow runs stably under real-world conditions and whether the process is measurable and controllable. Four key metrics matter most:

- throughput time: how quickly a process runs from trigger to completion

- error rate: how often manual intervention is required

- rework rate: how often results need to be corrected or re-initiated

- SLA compliance: whether defined deadlines and response times are actually met
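Given a log of runs, the four metrics can be computed directly. The `Run` record is a hypothetical structure. The sketch uses the median for throughput time (a choice, not a requirement, since a few outliers can distort the mean) and follows the definitions in the text, so "error rate" means the share of runs that needed manual intervention.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Run:
    triggered: datetime
    completed: datetime
    manual_intervention: bool  # someone had to step in
    reworked: bool             # result had to be corrected or re-initiated
    sla: timedelta             # agreed maximum duration for this run


def workflow_metrics(runs: list[Run]) -> dict[str, float]:
    if not runs:
        raise ValueError("metrics need at least one run")
    n = len(runs)
    durations = [r.completed - r.triggered for r in runs]
    return {
        "median_throughput_hours": median([d.total_seconds() for d in durations]) / 3600,
        "error_rate": sum(r.manual_intervention for r in runs) / n,
        "rework_rate": sum(r.reworked for r in runs) / n,
        "sla_compliance": sum(d <= r.sla for d, r in zip(durations, runs)) / n,
    }
```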

In addition, three things are needed: monitoring for errors, retries, and outages; audit trails for decisions and status changes; and clear escalation paths when a process gets stuck or delivers inaccurate results.
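An audit trail and a stuck-process check fit together naturally: if every status change is appended with a timestamp and an actor, the time since the last change reveals cases that have stalled. `Case`, `find_stuck`, the terminal states, and the 24-hour idle limit in the example are hypothetical; the returned IDs would feed the escalation path.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Case:
    case_id: str
    state: str
    opened: datetime
    # Audit trail entries: (timestamp, actor, from_state, to_state); only ever appended.
    history: list = field(default_factory=list)

    def move(self, to: str, actor: str, when: datetime) -> None:
        self.history.append((when, actor, self.state, to))
        self.state = to

    def last_change(self) -> datetime:
        return self.history[-1][0] if self.history else self.opened


def find_stuck(cases: list[Case], now: datetime, max_idle: timedelta,
               terminal: tuple[str, ...] = ("done", "cancelled")) -> list[str]:
    """IDs of open cases whose status has not changed for longer than max_idle."""
    return [c.case_id for c in cases
            if c.state not in terminal and now - c.last_change() > max_idle]
```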

With automation, responsibility shifts: decisions are made in a reproducible manner and must therefore also be explainable in a reproducible way. This applies not only to audit trails but also to data access, approvals, and escalations.
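One way to make automated decisions explainable is to store, for each decision, the exact inputs, the version of the rules applied, the outcome, and a human-readable reason, so the decision can be replayed later. The rule set, its version string, and the 10,000 limit are hypothetical.

```python
import json
from dataclasses import dataclass

RULE_VERSION = "approval-rules-v2"  # hypothetical identifier of the active rule set


def decide(amount: float, vendor_known: bool) -> tuple[str, str]:
    if not vendor_known:
        return "manual_review", "vendor not in master data"
    if amount > 10_000:
        return "manual_review", "amount above 10,000 limit"
    return "approve", "known vendor, amount within limit"


@dataclass(frozen=True)
class DecisionRecord:
    inputs: str        # canonical JSON of the inputs actually used
    rule_version: str
    outcome: str
    reason: str


def decide_and_record(amount: float, vendor_known: bool) -> DecisionRecord:
    inputs = json.dumps({"amount": amount, "vendor_known": vendor_known}, sort_keys=True)
    outcome, reason = decide(amount, vendor_known)
    return DecisionRecord(inputs, RULE_VERSION, outcome, reason)


def replay(record: DecisionRecord) -> bool:
    """Re-run the rules on the stored inputs; True if the same outcome results.
    Only meaningful while the recorded rule version is still the active one."""
    if record.rule_version != RULE_VERSION:
        raise ValueError("rule set has changed; replay needs the recorded version")
    args = json.loads(record.inputs)
    return decide(args["amount"], args["vendor_known"])[0] == record.outcome
```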

Conclusion

Workflow automation distinguishes clean processes from digitized improvisation. As long as workflows rely on ad-hoc requests, Excel, forwarded emails, and knowledge held by individual employees, automation accelerates not the process but its weaknesses: tasks are duplicated, approvals stall, data contradicts itself, and errors are only noticed once they have already taken effect.

The real lever, therefore, does not lie in the flow itself. It lies in the groundwork: clear triggers, unambiguous states, defined responsibilities, reliable data, and an operation that does not conceal deviations but catches them. This is where many projects fail after the pilot phase.

Those who take workflow automation seriously do not simply automate tasks. Instead, they select processes that are frequent, error-prone, and business-critical, and automate them so that they remain stable under real-world conditions. The next sensible step is therefore not a tool demo, but an honest assessment: which processes are relevant enough to be properly designed, measured, and operated?

FAQs

How do you get started with workflow automation in a meaningful way?

A meaningful start begins not with a tool, but with the selection of suitable processes. Key factors include high frequency, significant error costs, multiple system transitions, and a clearly measurable output. Small, clearly defined workflows deliver results quickly and also reveal early on whether process logic, data quality, and responsibilities hold up.

Who should be responsible for workflow automation in the company?

Workflow automation is neither a purely IT issue nor a departmental project. It typically lies at the intersection of business units, IT, and compliance. In practice, clear responsibilities are needed across three levels: process logic (business unit), technical implementation (IT), and governance/operations (cross-functional).

What distinguishes a working demo from stable automation?

A demo represents the ideal scenario. Stable automation continues to function even in the face of errors, incomplete data, and system failures. The difference lies in error handling, monitoring, and clearly defined processes.

Why do many automation projects fail after the pilot phase?

Because organization, governance, and operations are not taken into account. The pilot demonstrates technical feasibility, but not how processes will be managed and accounted for in the long term.

What role does process design play in automation?

Process design determines whether automation functions reliably. Without clear states, responsibilities, and decision-making logic, chaos ensues—regardless of the tool used.

When does automation become a risk?

When processes are not traceable, data flows unchecked, or errors go unnoticed. In such cases, automation scales uncertainty instead of efficiency.