Art first glance, prompt engineering looks like a new form of copywriting: a few clever phrases, and an AI model delivers better results. In practice, however, value rarely comes from “better words,” but from better specifications. A prompt is the interface between specialist requirements and a system of model, context, data access and (often) tools. And this is where it gets tricky. Models are often tested like a demo, but run like a product, without clear success criteria, without testing, without versioning, without guardrails and without responsibilities. At the same time, expectations in sales, operations, HR and compliance are increasing: Answers should be consistent, decisions comprehensible, risks manageable, effects measurable. Prompt engineering is therefore not so much a “prompt bag of tricks” but a discipline that brings together technology, process design and governance.

What is prompt engineering — and what isn't?

Prompt engineering means the systematic design of inputs, context and boundary conditionsso that a language model reliably performs a task in the desired form. “Prompt” does not just mean a block of text, but a specification:

- Task (what should happen?)

- Context/data (what can be derived from?)

- Rules (what is allowed/prohibited?)

- Format (what should the result look like?)

- optional: tool/data access (which actions are allowed?)

Typical misunderstandings

Misconception 1: Prompt engineering is “the right wording.”

In productive settings, eloquence counts less than reproducibility. If results cannot be tested, they cannot be controlled. Hard contracts (e.g. JSON scheme, allowed values, length limits, citation requirements) are therefore often more important than fine-tuning wording.

Misconception 2: Prompt engineering replaces data and process work.

Many problems are not model problems, but Context issues: incorrect document version, missing metadata, unclear responsibilities, inconsistent taxonomy, lack of authorization logic. Prompting can conceal such gaps, but it cannot permanently close them.

Areas of benefit/use cases: Where prompt engineering works in the company

The biggest lever lies where repeatable tasks lie on the border between information and action: Understanding content, outputting it in a structured manner, initiating workflows, documenting decisions.

1) Structured Extraction & Classification (Documents, Tickets, Emails)

Invoice processing example

The model extracts the invoice number, amount, date, payment term, supplier and cost center and outputs the results strictly as JSON according to a validatable scheme. Plausibility checks then validate the data (numeric amount, date in the correct format, cost center available). The result: less manual entry and fewer errors — but only if fields and rules are clearly defined.

2) Knowledge Q&A with source binding (RAG instead of “free answers”)

Example HR guidelines & internal processes

For questions such as “How does the travel allowance work? “does a retrieval step provide the appropriate policy or wiki excerpts in a targeted manner. The model formulates the answer exclusively on the basis of these excerpts and provides them with source references. In a retrieval-augmented generation (RAG) setup, this prevents general world knowledge from overlapping internal rules. As a result, the proportion of “sounds plausible” answers decreases and comprehensibility increases. There is no alternative if processes must be described consistently and in an audit-proof manner.

3) Tool/workflow control (function calling instead of “agent does something”)

Example of CRM data maintenance in RevOps

In CRM data maintenance, the model is not used as an “acting agent,” but as a decision layer with clear limits: It recommends structured actions such as “update contact,” “change deal stage,” or “create task to owner.” The execution is carried out using a tool layer with allowlist and privileges, so that only permitted fields and operations are possible. This creates controlled automation and a comprehensible, auditable trail.

Risks & Failure Modes: Why it tips over without engineering

This is the difference between demo and operation: Prompt engineering without a risk picture usually produces three types of damage: incorrect content, uncontrolled data flows or unstable processes.

Data quality and context limits

Many errors are context errors. Answers may appear formally correct, but relate to the wrong version of the document or incomplete excerpts. Inconsistent terms (such as “customer”, “client”, “account”) make classification and extraction difficult because taxonomy and fields are not properly normalized. In addition, there is the practical limit of the context window: Long documents are abbreviated, relevant passages are omitted, but the model provides a seemingly reliable but actually incomplete answer.

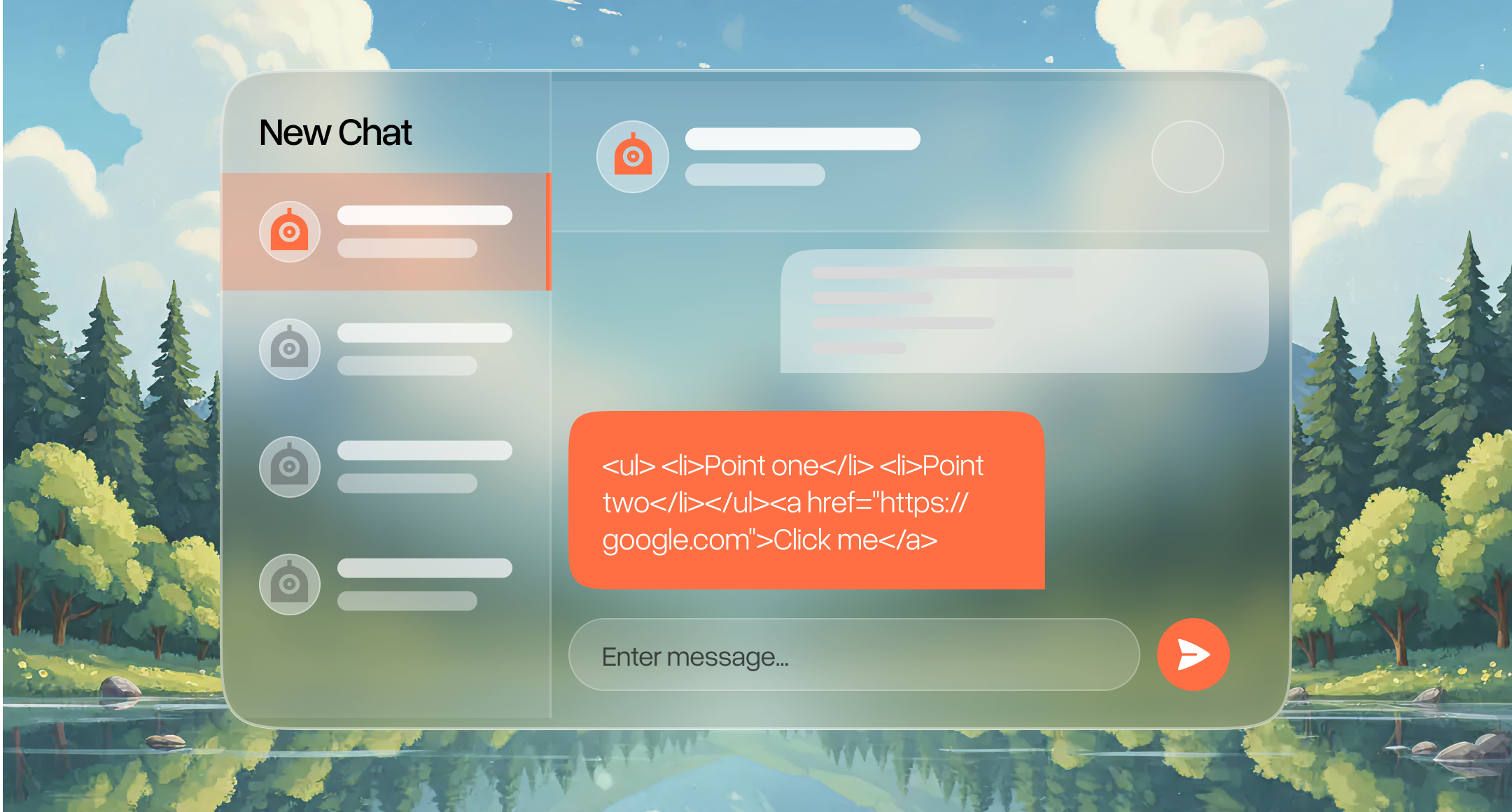

Security: Prompt injection and unsafe outputs

Prompt injection is a classic attack vector: Documents, emails, or web pages contain instructions such as “Ignore rules...” and try to push the model out of the box. Without a clear separation between systemic rules and untrusted input, this quickly becomes unstable. In addition, there is a risk of insecure output processing when HTML, SQL, or Markdown migrates unchecked to downstream systems. Budgets, allowlists and access controls are required at the latest when using tools, otherwise cost and risk outliers arise as a result of unchecked actions.

Compliance/data protection: permissions, logging, sensitivity

As soon as sensitive information is processed, the technical enforcement of authorizations determines the viability of the approach: Least Privilege is not a nice-to-have, but a prerequisite for avoiding “AI as a data outflow.” Logging must follow privacy-by-design and must not unnecessarily reproduce content. Sensitivity labels and DLP become relevant as soon as information circulates between systems and the question of what can be shared, stored or further processed is raised.

Change and operation: acceptance, responsibility, monitoring

Without clear roles (owner, reviewer, company), shadow processes arise: individual teams build up prompt collections, results drift apart, responsibility remains diffuse. Without training, inconsistent working methods arise. And without monitoring, it remains invisible whether the quality is actually increasing.

Decision framework: Scorecard instead of gut feeling

A pragmatic scorecard prevents prompt engineering from becoming an end in itself, i.e. from being carried out without a concrete benefit or measurable goal behind it. Each point is rated on a scale of 1 to 5 (1 = poor/high-risk, 5 = very good/manageable):

- Suitability of the task: clearly distinguishable, repeatable, definable result format

- Data situation: reliable sources, versioning, metadata, access control

- Risk profile: amount of damage due to errors, regulation, sensitivity

- Effort: prompt/template, integration, testing, change, operation

- Measurability: KPIs, golden set, acceptance criteria, monitoring possible

Rule of thumb: High suitability + good data + high measurability beat “exciting” use cases with unclear data.

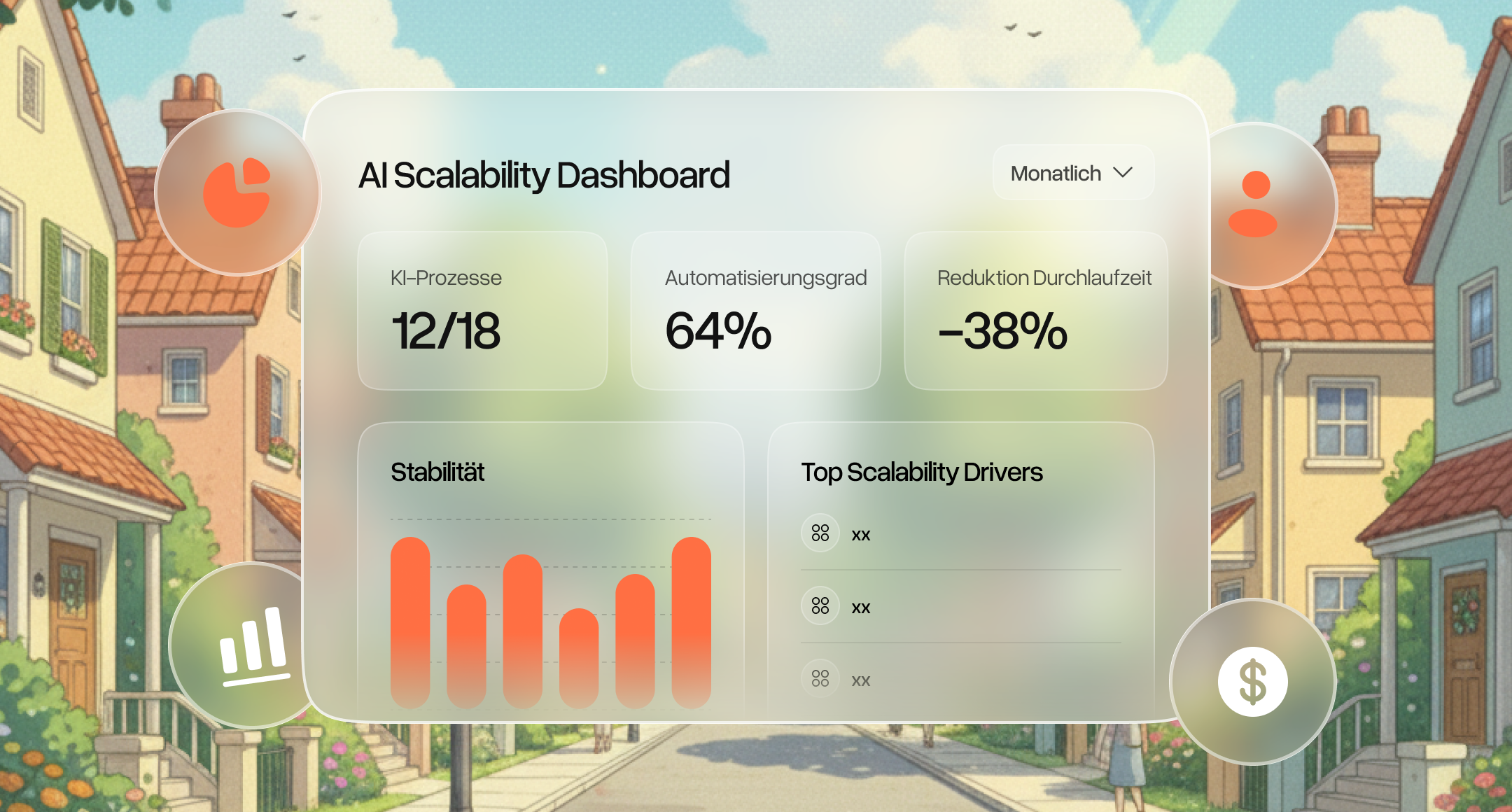

Operation and measurability: When prompt engineering becomes a product

In practice, a few robust indicators work: Format compliance measures how many outputs are formally correct (scheme, mandatory fields, permitted values). Quality is represented by field accuracy during extraction, “faithfulness” in Q&A and a review rate that shows how often human control is necessary. Efficiency can be controlled by processing time, manual rework and costs per case. In addition, risk indicators such as identified prompt injection patterns, DLP events and a remarkably high “UNCLEAR” rate provide clues as to whether data and context gaps rather than prompt wording are the problem.

Logging records comprehensibly what the system did: which version of the instructions was used, which model was in use and whether the result was good or problematic. In particular, key technical data is stored; content itself is only collected if there is a clear purpose and a data protection concept. Serves as a “safety anchor” Human-in-the-Loop (HITL). Sensitive actions are approved by people, unclear cases are collected in a targeted manner so that rules, data and templates can be improved. Drift management describes ongoing maintenance: When data, processes, or the model change, tests and protection mechanisms must be adapted so that quality remains stable.

Conclusion: What can Prompt Engineering do?

Prompt engineering is not “prompt magic,” but an engineering discipline: Requirements are translated into a specification, outputs are stabilized by contracts (format, source binding), risks are limited by guardrails and authorizations, quality is made measurable through testing and monitoring. The change of perspective lies in the ownership shift: It is not the model that is the bottleneck, but the data situation, roles, governance, process design and operation. This is where it is decided whether AI provides reliable support in sales, operations, HR or compliance or creates new uncertainty. A solid scorecard framework prevents actionism and makes prioritization understandable.

FAQ

What is prompt engineering?

Prompt engineering means formulating and limiting tasks (context, rules, output format) in such a way that an AI model delivers reliably. It is about clear guidelines instead of “nice words.”

Which prompt engineering techniques are most important in companies?

Techniques that make results verifiable are important: structured output (schema/JSON), source binding for knowledge questions, and a clear separation of rules versus data. Actions require controlled tool use with permissions/allowlists.

What does a Prompt Engineer do in practice?

The role translates technical requirements into testable specifications, builds templates and test cases (evals) and ensures stable quality. This includes integration with processes and guardrails for security and compliance.

How do you become a Prompt Engineer?

Relevant components include LLM basics, prompt/system design (contracts, retrieval, tool use) and operation (testing, versioning, monitoring). Practice is created through measurable use cases such as extraction or source Q&A.

When is prompting no longer enough — and then what?

When quality fluctuates despite clean prompts, it is usually due to data/context gaps or a lack of controls. That's when better sources/retrieval, stricter validation, and secure tool/permission design help; fine tuning is more of a later step.